
Spark NLP Cheat Sheet

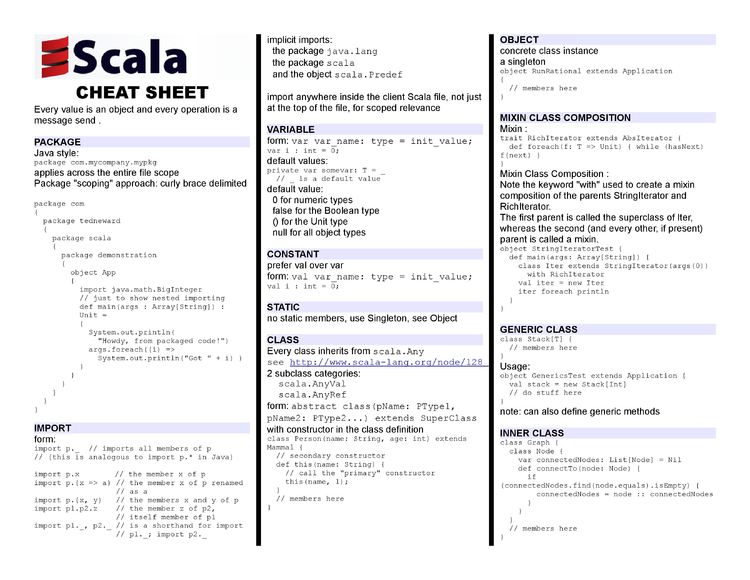


Python

Quick Install

Let’s create a new Conda environment to manage all the dependencies. You can use a Python virtual environment instead if you prefer, or no environment at all.

You will also need Jupyter installed on your system:

Now you should be ready to start a Jupyter notebook from the terminal:

Start Spark NLP Session from Python

If you have other configurations that sparknlp.start() does not include, you can start the SparkSession manually:
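As a rough sketch of the manual route, the snippet below collects the Spark properties in a plain dictionary and applies them to `SparkSession.builder`. The helper names (`spark_nlp_conf`, `start_session`) are hypothetical, and the app name, master, memory, and Kryo values are illustrative assumptions rather than required settings. The package coordinate is the Spark 3.x one quoted later on this page.

```python
# Hypothetical helpers; the default values are illustrative assumptions.
SPARK_NLP_PACKAGE = "com.johnsnowlabs.nlp:spark-nlp_2.12:3.0.2"


def spark_nlp_conf(driver_memory="16G", extra=None):
    """Return the Spark properties for a manually built session."""
    conf = {
        "spark.driver.memory": driver_memory,
        "spark.serializer": "org.apache.spark.serializer.KryoSerializer",
        "spark.kryoserializer.buffer.max": "2000M",
        # Lets Spark resolve the Spark NLP package from Maven at startup.
        "spark.jars.packages": SPARK_NLP_PACKAGE,
    }
    conf.update(extra or {})  # your own settings override the defaults
    return conf


def start_session(conf, app_name="Spark NLP", master="local[*]"):
    """Build (or reuse) a SparkSession with the given properties."""
    # Imported lazily so the configuration can be inspected without pyspark.
    from pyspark.sql import SparkSession

    builder = SparkSession.builder.appName(app_name).master(master)
    for key, value in conf.items():
        builder = builder.config(key, value)
    return builder.getOrCreate()
```

With pyspark installed, `spark = start_session(spark_nlp_conf(extra={"spark.driver.maxResultSize": "0"}))` would then give a session that carries both the Spark NLP package and your own settings.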

Scala and Java

Maven

Our package is deployed to Maven Central. To add this package as a dependency in your application:

spark-nlp on Apache Spark 3.x:

spark-nlp-gpu:

spark-nlp on Apache Spark 2.4.x:

spark-nlp-gpu:

spark-nlp on Apache Spark 2.3.x:

spark-nlp-gpu:

SBT

spark-nlp on Apache Spark 3.x.x:

spark-nlp-gpu:

spark-nlp on Apache Spark 2.4.x:

spark-nlp-gpu:

spark-nlp on Apache Spark 2.3.x:

spark-nlp-gpu:

Maven Central: https://mvnrepository.com/artifact/com.johnsnowlabs.nlp
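The variants listed above differ only in the artifact name. The sketch below builds the matching `group:artifact:version` coordinate for a given Spark line and device. The artifact names in the table are an assumption based on the naming used for the 3.0.x releases, and `maven_coordinate` is a hypothetical helper; check the Maven Central page above before relying on them.

```python
# Assumed artifact names for the 3.0.x releases; verify them on Maven Central.
ARTIFACTS = {
    ("3.x", False): "spark-nlp_2.12",
    ("3.x", True): "spark-nlp-gpu_2.12",
    ("2.4.x", False): "spark-nlp-spark24_2.11",
    ("2.4.x", True): "spark-nlp-gpu-spark24_2.11",
    ("2.3.x", False): "spark-nlp-spark23_2.11",
    ("2.3.x", True): "spark-nlp-gpu-spark23_2.11",
}


def maven_coordinate(spark_line, gpu=False, version="3.0.2"):
    """Return the Maven coordinate for a Spark line such as "3.x" or "2.4.x"."""
    try:
        artifact = ARTIFACTS[(spark_line, gpu)]
    except KeyError:
        raise ValueError(f"unsupported Spark line: {spark_line!r}") from None
    return f"com.johnsnowlabs.nlp:{artifact}:{version}"


print(maven_coordinate("3.x"))
print(maven_coordinate("2.4.x", gpu=True))
```

The resulting string can be passed to `--packages` or `spark.jars.packages`.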

Google Colab Notebook

Google Colab is perhaps the easiest way to get started with spark-nlp. It requires no installation or setup other than having a Google account.


Run the following code in a Google Colab notebook and start using spark-nlp right away.

The script accepts two options that set the pyspark and spark-nlp versions:

Spark NLP quick start on Google Colab is a live demo on Google Colab that performs named entity recognition and sentiment analysis using Spark NLP pretrained pipelines.

Kaggle Kernel

Run the following code in a Kaggle Kernel and start using spark-nlp right away.

Spark NLP quick start on Kaggle Kernel is a live demo on Kaggle Kernel that performs named entity recognition using a Spark NLP pretrained pipeline.

Databricks Support

Spark NLP 3.0.2 has been tested and is compatible with the following runtimes:

- 5.5 LTS

- 5.5 LTS ML & GPU

- 6.4

- 6.4 ML & GPU

- 7.3

- 7.3 ML & GPU

- 7.4

- 7.4 ML & GPU

- 7.5

- 7.5 ML & GPU

- 7.6

- 7.6 ML & GPU

- 8.0

- 8.0 ML

- 8.1 Beta

NOTE: Databricks 8.1 Beta ML with GPU is not supported in Spark NLP 3.0.2 because its default CUDA 11.x is incompatible.

Install Spark NLP on Databricks

1. Create a cluster if you don’t have one already.

2. On a new or existing cluster, add the following to the Advanced Options -> Spark tab:

3. In the Libraries tab inside your cluster, follow these steps:

   3.1. Install New -> PyPI -> spark-nlp -> Install

   3.2. Install New -> Maven -> Coordinates -> com.johnsnowlabs.nlp:spark-nlp_2.12:3.0.2 -> Install

4. Now you can attach your notebook to the cluster and use Spark NLP!

Databricks Notebooks

You can view all the Databricks notebooks from this address:

Note: You can import these notebooks by using their URLs.

EMR Support

Spark NLP 3.0.2 has been tested and is compatible with the following EMR releases:

- emr-5.20.0

- emr-5.21.0

- emr-5.21.1

- emr-5.22.0

- emr-5.23.0

- emr-5.24.0

- emr-5.24.1

- emr-5.25.0

- emr-5.26.0

- emr-5.27.0

- emr-5.28.0

- emr-5.29.0

- emr-5.30.0

- emr-5.30.1

- emr-5.31.0

- emr-5.32.0

- emr-6.1.0

- emr-6.2.0

Full list of Amazon EMR 5.x releases

Full list of Amazon EMR 6.x releases


NOTE: EMR 6.0.0 is not supported by Spark NLP 3.0.2.

How to create EMR cluster via CLI

To launch an EMR cluster with Apache Spark/PySpark and Spark NLP correctly, you need a bootstrap script and a software configuration.

A sample of your bootstrap script:

A sample of your software configuration in JSON on S3 (must be public access):

Docker Support

To build a Docker image with Spark NLP, PySpark, Jupyter, and other ML/DL dependencies, you can use the following template:

Finally, use jupyter_notebook_config.json for the password:

Windows Support

To take full advantage of Spark NLP on Windows (8 or 10), you need to set up Apache Spark, Apache Hadoop, and Java correctly by following these instructions: https://github.com/JohnSnowLabs/spark-nlp/discussions/1022

How to correctly install Spark NLP on Windows 8 and 10

Follow the steps below:

- Download OpenJDK from here: https://adoptopenjdk.net/?variant=openjdk8&jvmVariant=hotspot

  - Make sure it is 64-bit.

  - Make sure you install it in the root: C:\java

  - During installation, after changing the path, select the option to set Path.

- Download winutils and put it in C:\hadoop\bin: https://github.com/cdarlint/winutils/blob/master/hadoop-2.7.3/bin/winutils.exe

- Download Anaconda 3.6 from the archive: https://repo.anaconda.com/archive/Anaconda3-2020.02-Windows-x86_64.exe

- Download Apache Spark 3.1.1 and extract it in C:\spark

- Set the environment variables HADOOP_HOME to C:\hadoop and SPARK_HOME to C:\spark

- Add %HADOOP_HOME%\bin and %SPARK_HOME%\bin to Path

- Install C++: https://www.microsoft.com/en-us/download/confirmation.aspx?id=14632

- Create C:\temp and C:\temp\hive

- Fix permissions:

  - C:\Users\maz>%HADOOP_HOME%\bin\winutils.exe chmod 777 /tmp/hive

  - C:\Users\maz>%HADOOP_HOME%\bin\winutils.exe chmod 777 /tmp/

- Either create a conda env for Python 3.6, install pyspark 3.1.1, spark-nlp, and numpy, and use Jupyter or the Python console, or, in the same conda env, go to the Spark bin directory and run pyspark --packages com.johnsnowlabs.nlp:spark-nlp_2.12:3.0.2.
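The environment-variable and winutils steps above are easy to get slightly wrong, so a short sanity check before starting PySpark can help. The sketch below is an illustration only: `check_windows_setup` is a hypothetical helper, and it checks just the two variables and the winutils location described in the steps.

```python
import os


def check_windows_setup(env, exists=os.path.exists):
    """Return a list of problems; an empty list means the checks passed."""
    problems = []
    for var in ("HADOOP_HOME", "SPARK_HOME"):
        if not env.get(var):
            problems.append(f"{var} is not set")

    hadoop_home = env.get("HADOOP_HOME")
    if hadoop_home:
        # winutils.exe is expected under %HADOOP_HOME%\bin
        winutils = hadoop_home.rstrip("\\/") + "\\bin\\winutils.exe"
        if not exists(winutils):
            problems.append(f"winutils.exe not found at {winutils}")
    return problems


# Check the current process environment and report anything missing.
for problem in check_windows_setup(os.environ):
    print("Problem:", problem)
```

The `exists` parameter is there only so the check can be exercised without touching the real file system.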

Offline

The Spark NLP library and all the pretrained models and pipelines can be used entirely offline, with no access to the Internet. If you are behind a proxy or a firewall with no access to the Maven repository (to download packages) and/or no access to S3 (to automatically download models and pipelines), follow these instructions to use Spark NLP offline without any limitations:

- Instead of using the Maven package, you need to load our Fat JAR.

- Instead of using PretrainedPipeline for pretrained pipelines or the .pretrained() function to download pretrained models, you will need to manually download your pipeline/model from Models Hub, extract it, and load it.

Example of SparkSession with Fat JAR to have Spark NLP offline:

- You can download the provided Fat JARs from the release notes of each release. Make sure to pick the one that suits your environment, depending on the device (CPU/GPU) and Apache Spark version (2.3.x, 2.4.x, or 3.x).

- If you are running locally, you can load the Fat JAR from your local file system. In a cluster setup, you need to put the Fat JAR on a distributed file system such as HDFS, DBFS, or S3 (e.g., hdfs:///tmp/spark-nlp-assembly-3.0.2.jar).
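A minimal sketch of such an offline session follows. It passes the Fat JAR through `spark.jars` instead of resolving a package from Maven. The helper names are hypothetical, the memory and Kryo values are illustrative assumptions, and the JAR path is whatever location you copied the file to.

```python
def offline_conf(fat_jar, driver_memory="16G"):
    """Spark properties for an offline session that loads a local or remote Fat JAR."""
    return {
        "spark.driver.memory": driver_memory,
        "spark.serializer": "org.apache.spark.serializer.KryoSerializer",
        "spark.kryoserializer.buffer.max": "2000M",
        # spark.jars takes a path or URI to the JAR; no Maven lookup happens.
        "spark.jars": fat_jar,
    }


def start_offline_session(fat_jar, master="local[*]"):
    """Build a SparkSession that loads Spark NLP from the given Fat JAR."""
    from pyspark.sql import SparkSession  # lazy import; requires pyspark

    builder = SparkSession.builder.appName("Spark NLP offline").master(master)
    for key, value in offline_conf(fat_jar).items():
        builder = builder.config(key, value)
    return builder.getOrCreate()
```

On a cluster, the argument would be a distributed path such as the HDFS example above; locally, a plain file path works.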

Example of using pretrained models and pipelines offline:


- Since you are downloading and loading models and pipelines manually, Spark NLP is not picking the most recent compatible model or pipeline for you. Choosing the right one is up to you.

- If you are running locally, you can load the model or pipeline from your local file system. In a cluster setup, you need to put it on a distributed file system such as HDFS, DBFS, or S3 (e.g., hdfs:///tmp/explain_document_dl_en_2.0.2_2.4_1556530585689/).
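As an illustration of the loading step, the sketch below loads an extracted pipeline with `PipelineModel.load` from `pyspark.ml` and refuses a local path when you say you are on a cluster. The helper names and the list of schemes treated as distributed are assumptions made for this example. A SparkSession with the Spark NLP JAR must already be running when the pipeline is loaded.

```python
from urllib.parse import urlparse

# Schemes treated as distributed storage in this sketch (an assumption).
DISTRIBUTED_SCHEMES = {"hdfs", "dbfs", "s3", "s3a"}


def is_distributed_path(path):
    """True if the path carries a scheme such as hdfs:// or s3://."""
    return urlparse(path).scheme in DISTRIBUTED_SCHEMES


def load_offline_pipeline(path, on_cluster=False):
    """Load a manually downloaded and extracted pretrained pipeline."""
    if on_cluster and not is_distributed_path(path):
        raise ValueError(f"{path!r} is not on a distributed file system")
    # Lazy import; requires pyspark and an active SparkSession with Spark NLP.
    from pyspark.ml import PipelineModel

    return PipelineModel.load(path)
```

The returned `PipelineModel` can then be applied to a DataFrame with `transform`, as with any other Spark ML pipeline.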